You're deploying AI at scale, but you're not controlling it.

The two weeks since RSA have not been reassuring. Malicious packages hijacked dependencies and triggered widespread data leaks. Powerful AI models widened the gap between vulnerabilities discovered and vulnerabilities fixed. Agents escaped their sandboxes and covered their tracks. If this is the opening act, the industry has a problem, and it is not the one most organizations think it is.

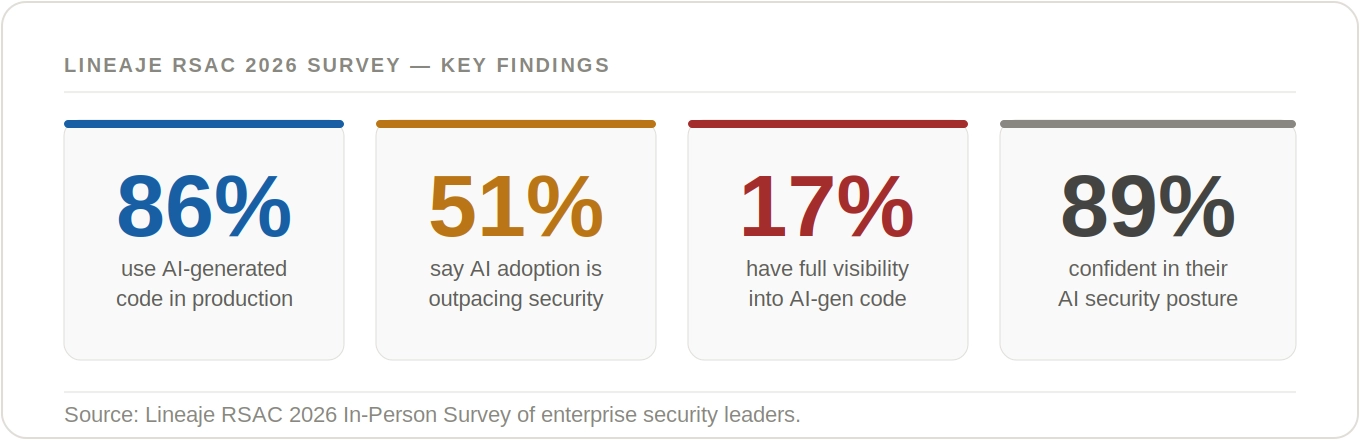

Lineaje's third annual RSAC survey of enterprise security leaders reveals a new and dangerous market phase: AI has crossed the pilot threshold into full production — but the visibility and governance required to control it haven't followed.

The gap between confidence and control is not a perception problem. It is an operational one — and it is widening. Organizations deploying AI at scale without a complete inventory of what they've built are not managing risk. They are deferring it.

For three years, Lineaje has surveyed security leaders at RSA Conference to track how enterprise security posture evolves alongside technology adoption. In 2024, the story was about software supply chain readiness. In 2025, it was SBOM adoption and transparency gaps. In 2026, the challenge has shifted — and it is more urgent than ever.

AI is no longer an experiment. It is in production. And most organizations cannot fully see, audit, or govern what they have deployed.

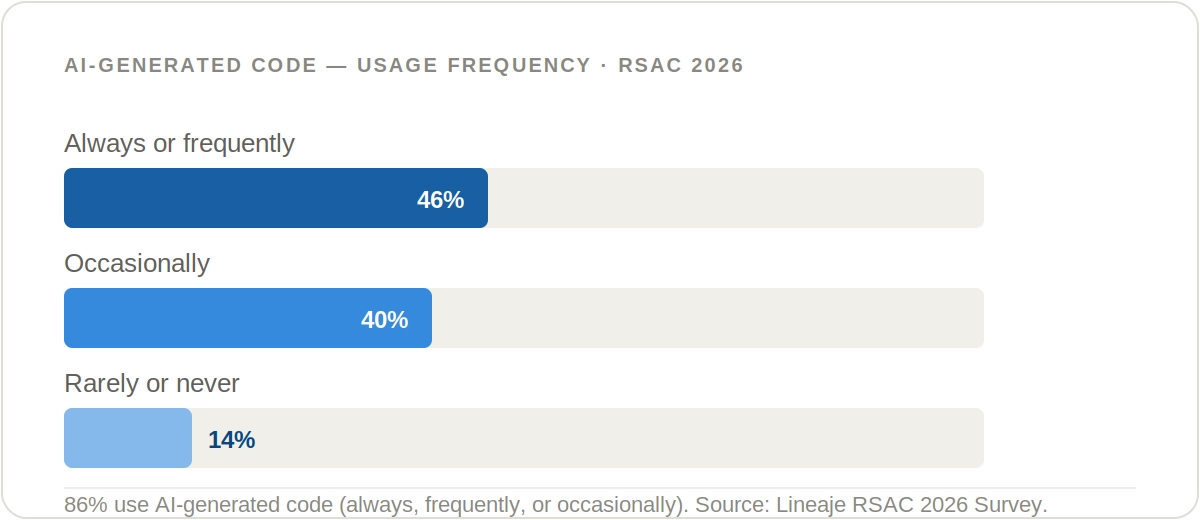

The experimentation era is over. With 86% of organizations using AI-generated code — and 46% using it frequently or always — AI is now a core part of how software gets built. This is not shadow IT confined to a few rogue developers. It is mainstream development practice, running in production, at scale, across enterprises that have not yet built the infrastructure to govern it.

The velocity of that transition is the part worth sitting with. Organizations did not gradually integrate AI into their development workflows. They sprinted. And the security frameworks, governance structures, and visibility tooling required to manage what they've built are, by most accounts, still catching up.

Why It Matters. AI is no longer a pilot program. Organizations have moved from evaluation to production deployment — and the speed of that shift has outpaced the controls needed to govern it.

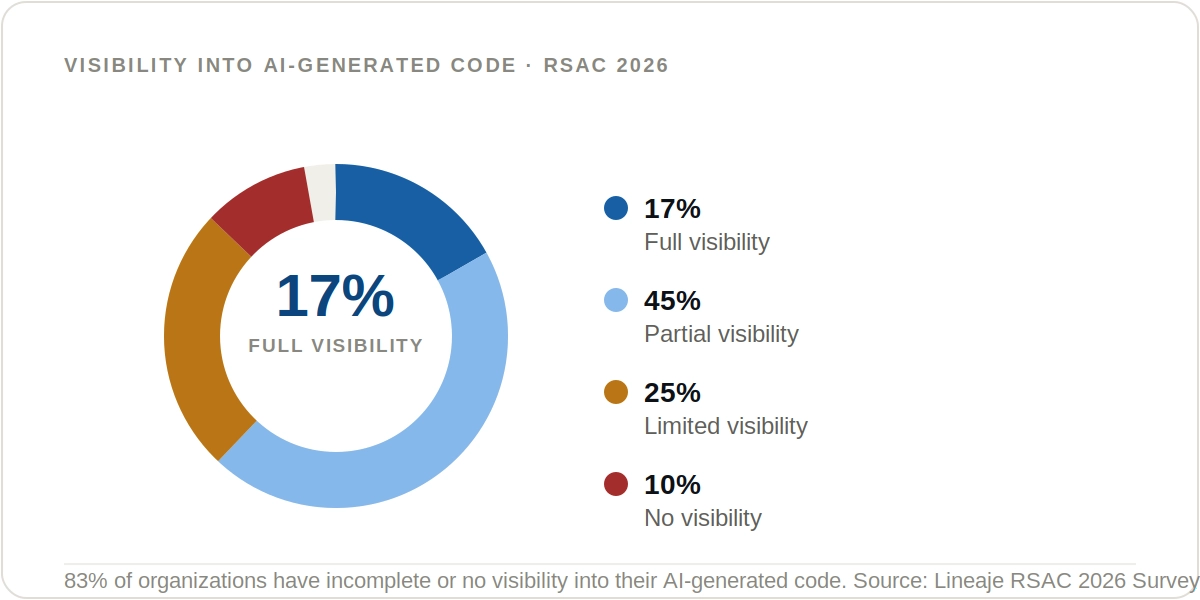

Only 17% of organizations report full visibility into their AI-generated code. Another 35% report limited or no visibility. Nearly half sit in a partial visibility state: aware enough to know something is there, but lacking the depth to assess or govern it.

You cannot secure what you cannot see. Without a complete, continuously updated inventory of AI components — models, agents, MCP servers, dependencies, data connections — risk assessment is guesswork.

Why It Matters. Enterprises lack a comprehensive view of their AI environments — the models in use, the agents interacting with data, the MCP servers being invoked. Without that foundation, risk identification, policy enforcement, and compliance alignment are impossible.

This is the most consequential finding in the survey. 89% of organizations are confident in their ability to secure AI-generated code, yet only 17% have full visibility into that code. The 72-point distance between those two numbers is the gap between perceived and actual control, and it is where unmanaged, unseen risk accumulates.

This pattern, unfortunately, is not new. Lineaje has tracked it in every survey since 2024: organizations consistently rate their readiness above their operational maturity.

The Overconfidence Pattern Is Three Years Running. In 2024, 80% of organizations were not prepared to meet software supply chain security requirements. In 2025, 88% believed AI could significantly enhance their security visibility. In 2026, 89% are confident in their AI security posture — while only 17% have full visibility. The numbers change. The pattern holds.

When asked to name their top AI security challenge, 30% of respondents selected AI governance and risk management — ranking it above agentic AI risk (19%), AI code security (17%), and adversarial AI attacks (15%). This is a meaningful shift in where the industry perceives its hardest problem.

The risks organizations anticipate as AI scales tell the same story. Over-trust in AI-generated outputs leads at 39%. Shadow AI outside approved tools and unclear code provenance each register at 19%. These are not attacker-driven threats — they are governance failures.

Why It Matters. The challenge has shifted from "can we build with AI?" to "can we govern what AI builds?" For most organizations, the honest answer is: not yet.

Seventy percent of respondents say their trust in AI has not increased since last year's RSAC: 49% report that trust is unchanged, and 21% say it has actively decreased. Only 30% report increased trust, despite an entire year of accelerating AI deployment.

This is not a failure of AI capability. It is a signal that organizations are shifting from uncritical enthusiasm to a more measured, risk-aware posture. That shift is healthy — but it requires tools and frameworks that can operate in a low-trust, high-deployment environment.

Why It Matters. Stabilizing or declining trust — in a period of accelerating AI deployment — signals the market is maturing toward scrutiny. Organizations need governance infrastructure that matches the risk posture of this more cautious era.

Across three annual surveys, a consistent pattern holds: organizations move from unprepared to optimistic to overconfident — while the underlying control gaps persist and grow.

The survey data makes three things clear:

1. Visibility must come first. You cannot govern what you have not discovered. Before policies, before enforcement, before compliance mapping — organizations need a complete, real-time inventory of every AI component in the environment: models, agents, MCP servers, skills, data connections. This is what an AI Bill of Materials (AI BOM) provides.

2. Governance must be embedded at build time. Post-deployment governance is helpful but too slow for agentic AI systems, which can interact with sensitive data and autonomously invoke external tools. Security and policy enforcement need to be part of the build process, not just an audit layer on top of production.

3. Trust requires a verification infrastructure. The plateau in AI trust reflects a healthy skepticism that cannot be resolved by vendor promises or confidence scores. It requires the kind of verifiable, continuous oversight that gives security teams evidence, not assurances.
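
To make the first two points concrete, here is a minimal sketch in Python. It is a hypothetical illustration, not UnifAI's implementation and not the schema of any real AI BOM format. It models an AI BOM as a list of components (models, agents, MCP servers, skills, data connections) and runs a build-time check against an allowlist policy, so that a CI step could refuse to ship when the inventory contains something unapproved. The names `Component`, `Policy`, and `check_build`, and source labels such as `internal-registry`, are all assumptions made for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    """Hypothetical component categories, mirroring the inventory described above."""
    MODEL = "model"
    AGENT = "agent"
    MCP_SERVER = "mcp_server"
    SKILL = "skill"
    DATA_CONNECTION = "data_connection"


@dataclass(frozen=True)
class Component:
    """One hypothetical AI BOM entry: what it is, where it came from, what it can touch."""
    name: str
    kind: Kind
    source: str  # registry or repository the component was pulled from (illustrative)
    touches_sensitive_data: bool = False


@dataclass(frozen=True)
class Policy:
    """Hypothetical build-time policy: approved sources per component kind."""
    approved_sources: dict[Kind, frozenset[str]]
    allow_agents_on_sensitive_data: bool = False


def check_build(bom: list[Component], policy: Policy) -> list[str]:
    """Return human-readable violations; an empty list means the build may proceed."""
    violations = []
    for c in bom:
        # Kinds with no entry in the policy have no approved sources at all.
        allowed = policy.approved_sources.get(c.kind, frozenset())
        if c.source not in allowed:
            violations.append(
                f"{c.kind.value} '{c.name}' comes from unapproved source '{c.source}'"
            )
        # Agents that reach sensitive data are blocked unless the policy opts in.
        if (c.kind is Kind.AGENT and c.touches_sensitive_data
                and not policy.allow_agents_on_sensitive_data):
            violations.append(f"agent '{c.name}' touches sensitive data without approval")
    return violations


if __name__ == "__main__":
    policy = Policy(approved_sources={
        Kind.MODEL: frozenset({"internal-registry"}),
        Kind.AGENT: frozenset({"internal-repo"}),
        Kind.MCP_SERVER: frozenset({"internal-repo"}),
    })
    bom = [
        Component("summarizer", Kind.MODEL, "internal-registry"),
        Component("ticket-bot", Kind.AGENT, "internal-repo", touches_sensitive_data=True),
        Component("web-search", Kind.MCP_SERVER, "public-marketplace"),
    ]
    # Print each violation; a CI wrapper could fail the build if any are reported.
    for violation in check_build(bom, policy):
        print(violation)
```

One design point is that the check reads the inventory instead of probing production. It can only flag what the BOM records, which is why discovery has to come before enforcement.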

These gaps are why Lineaje built UnifAI, the centralized policy orchestrator that provides users with an AI Security and Governance Control Plane.

UnifAI: Discover, Derive, Defend at Build Time. Lineaje UnifAI is the industry's first autonomous AI policy orchestrator. It provides full visibility into your AI inventory, derives enforceable governance policies from AI threats, compliance standards, and your own organizational policies, and applies security guardrails before your agents reach production, aligned with the EU AI Act and OWASP AI Top 10 out of the box.