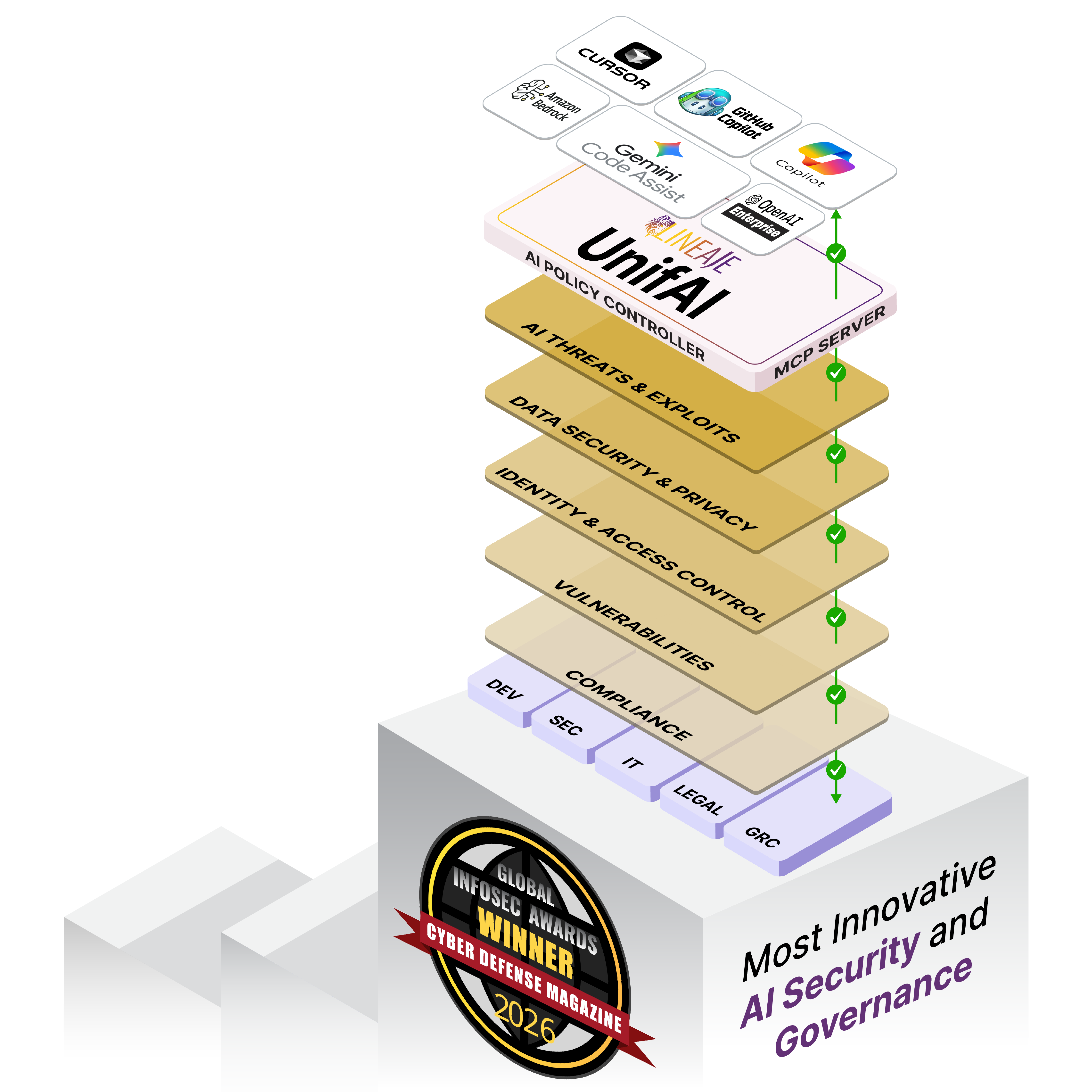

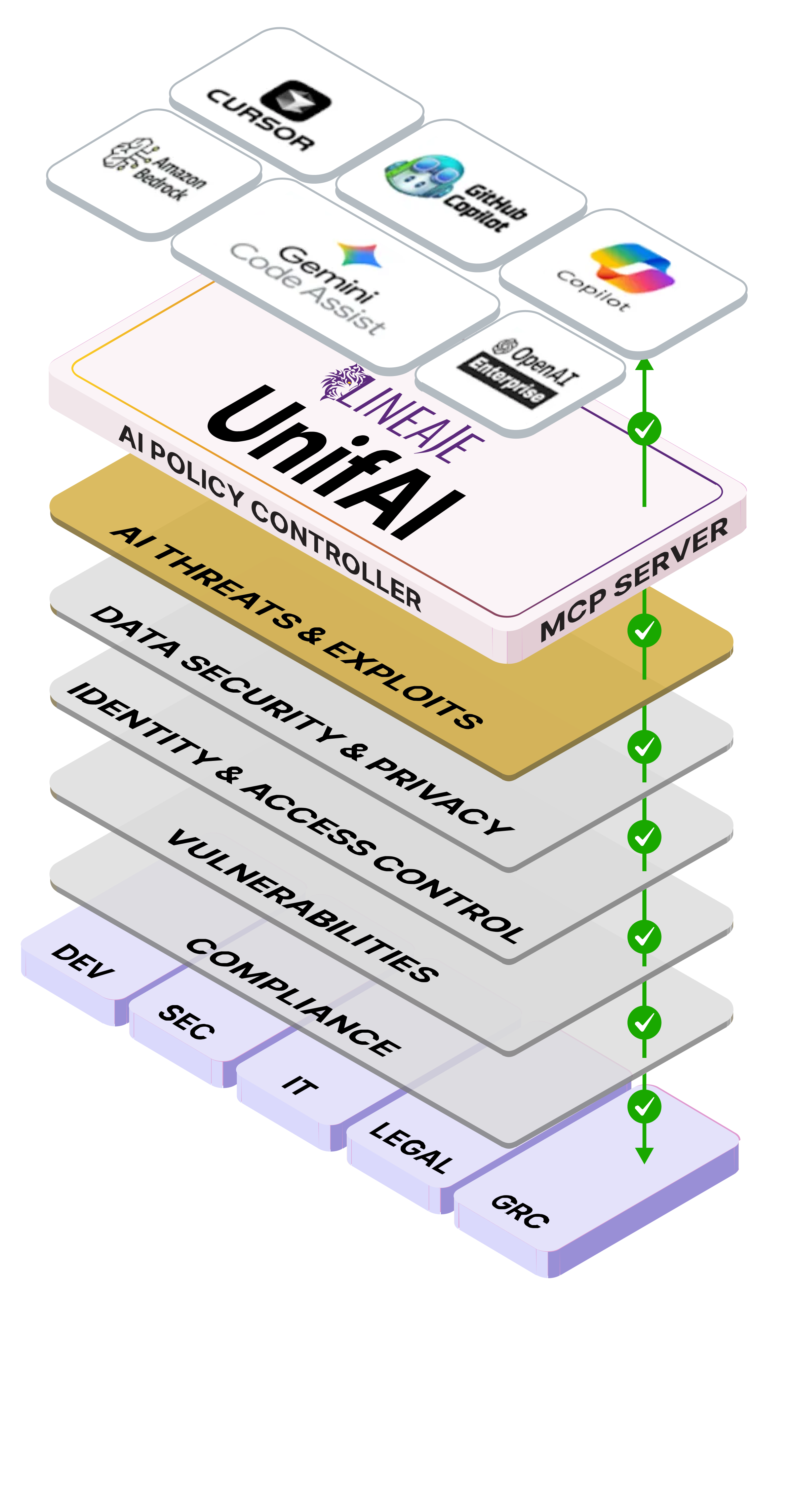

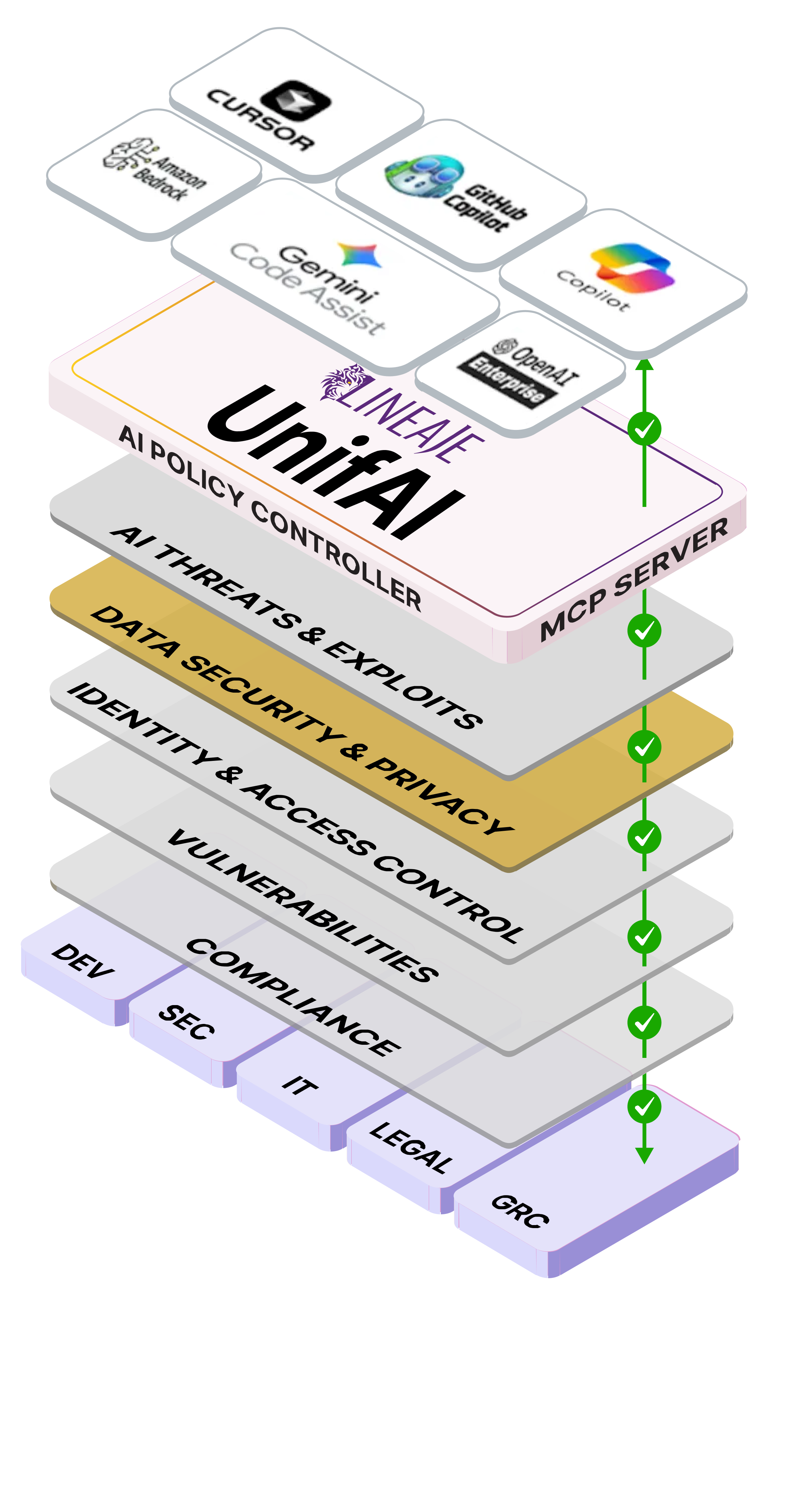

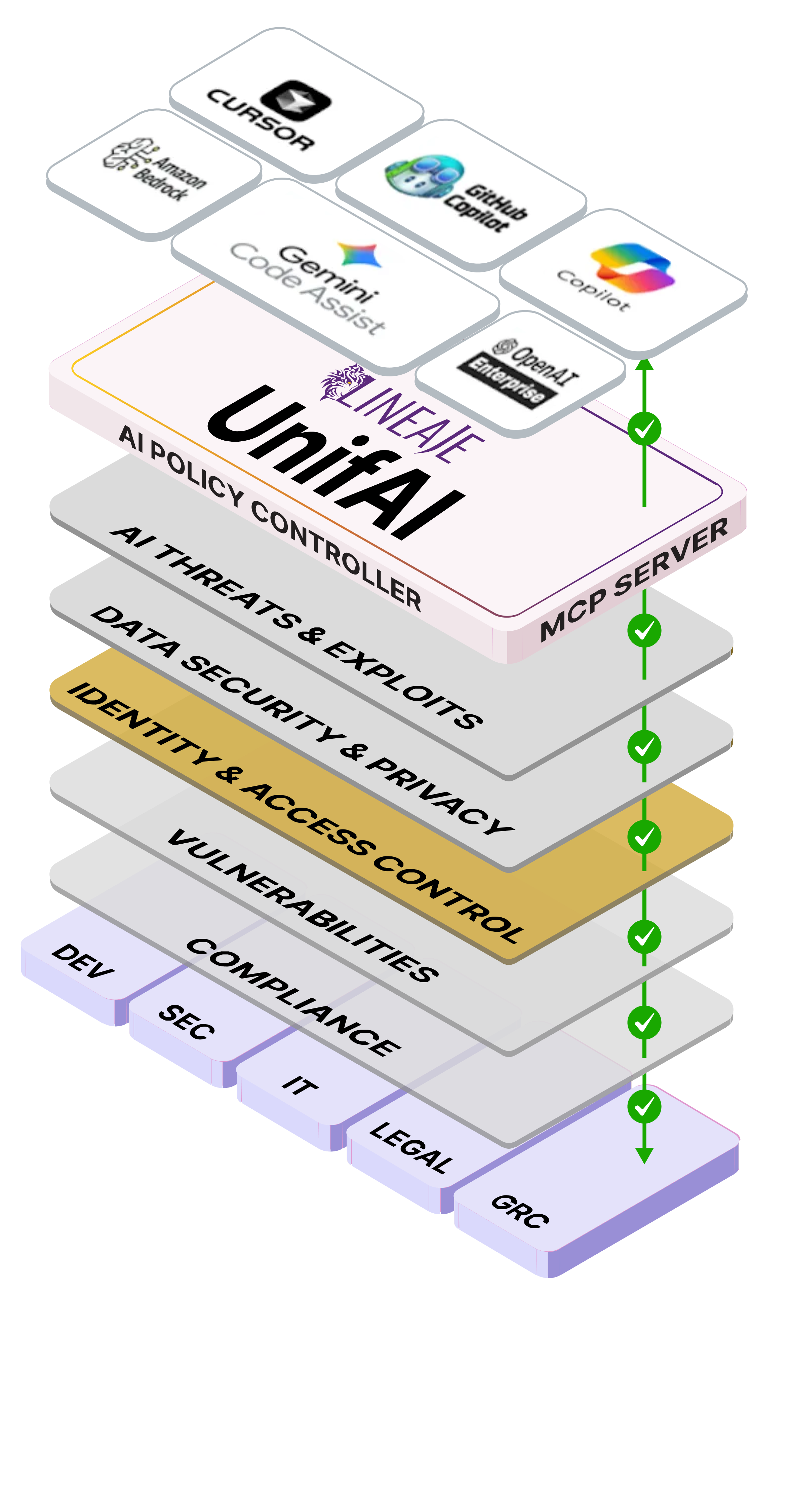

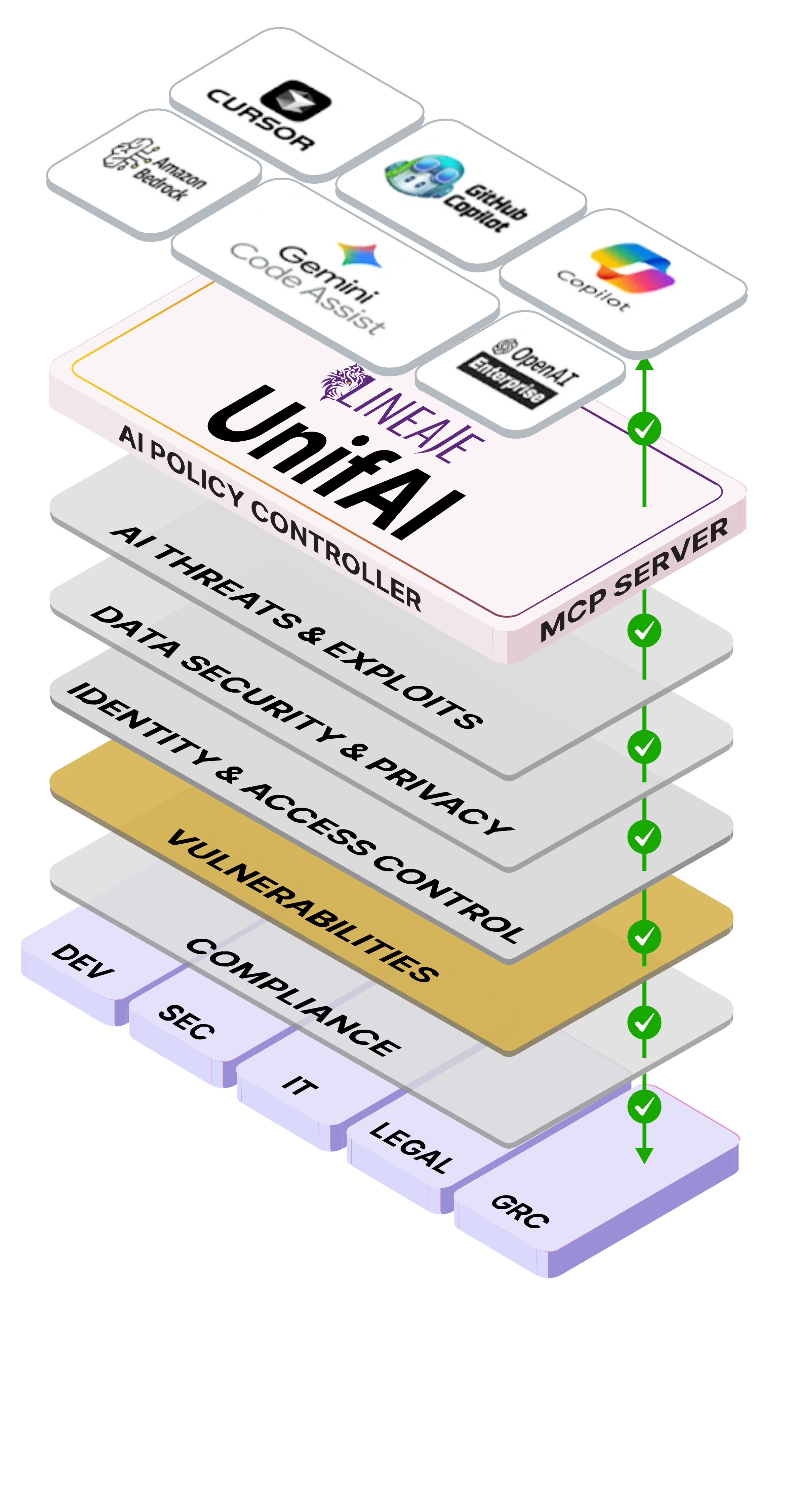

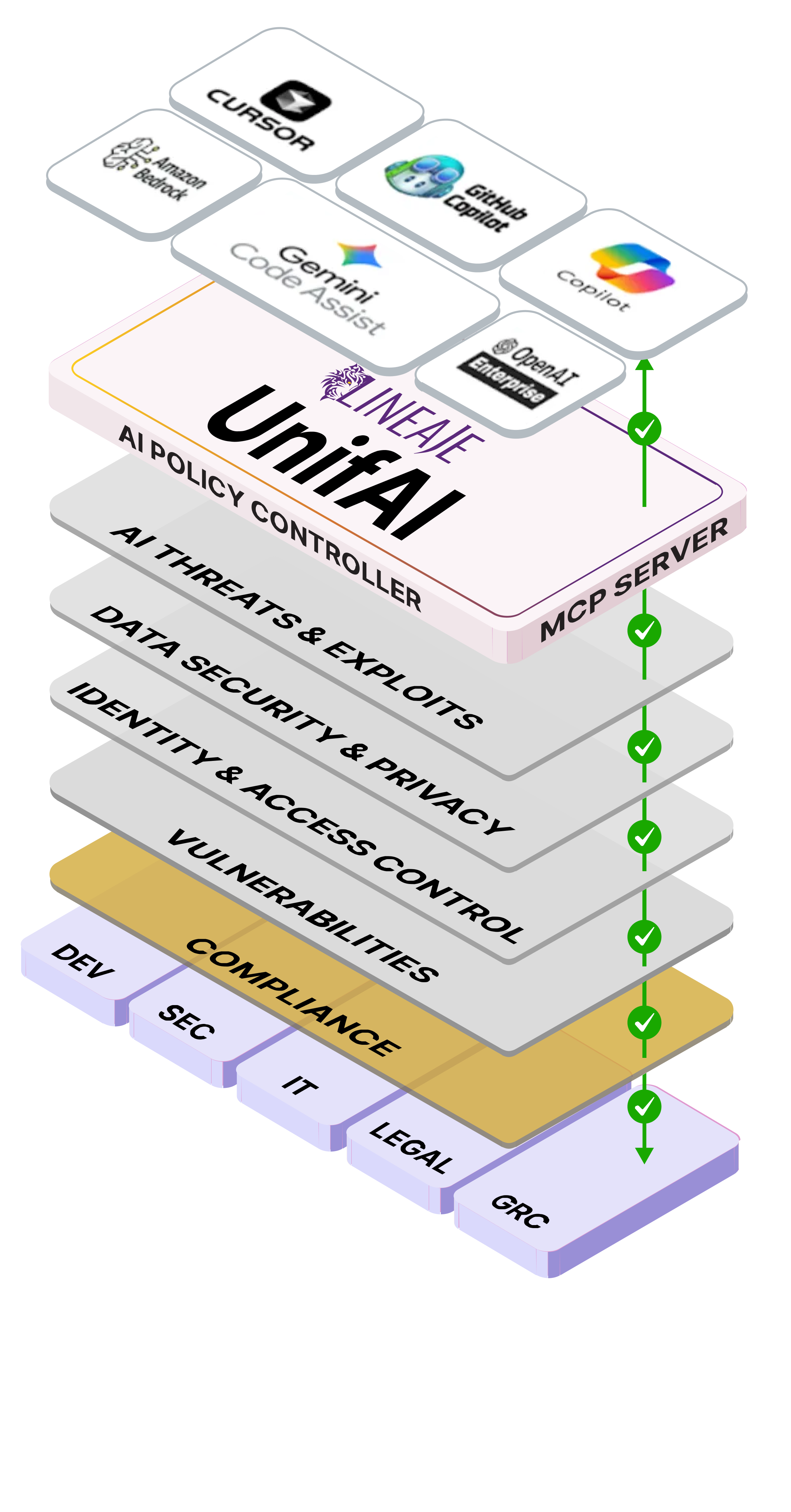

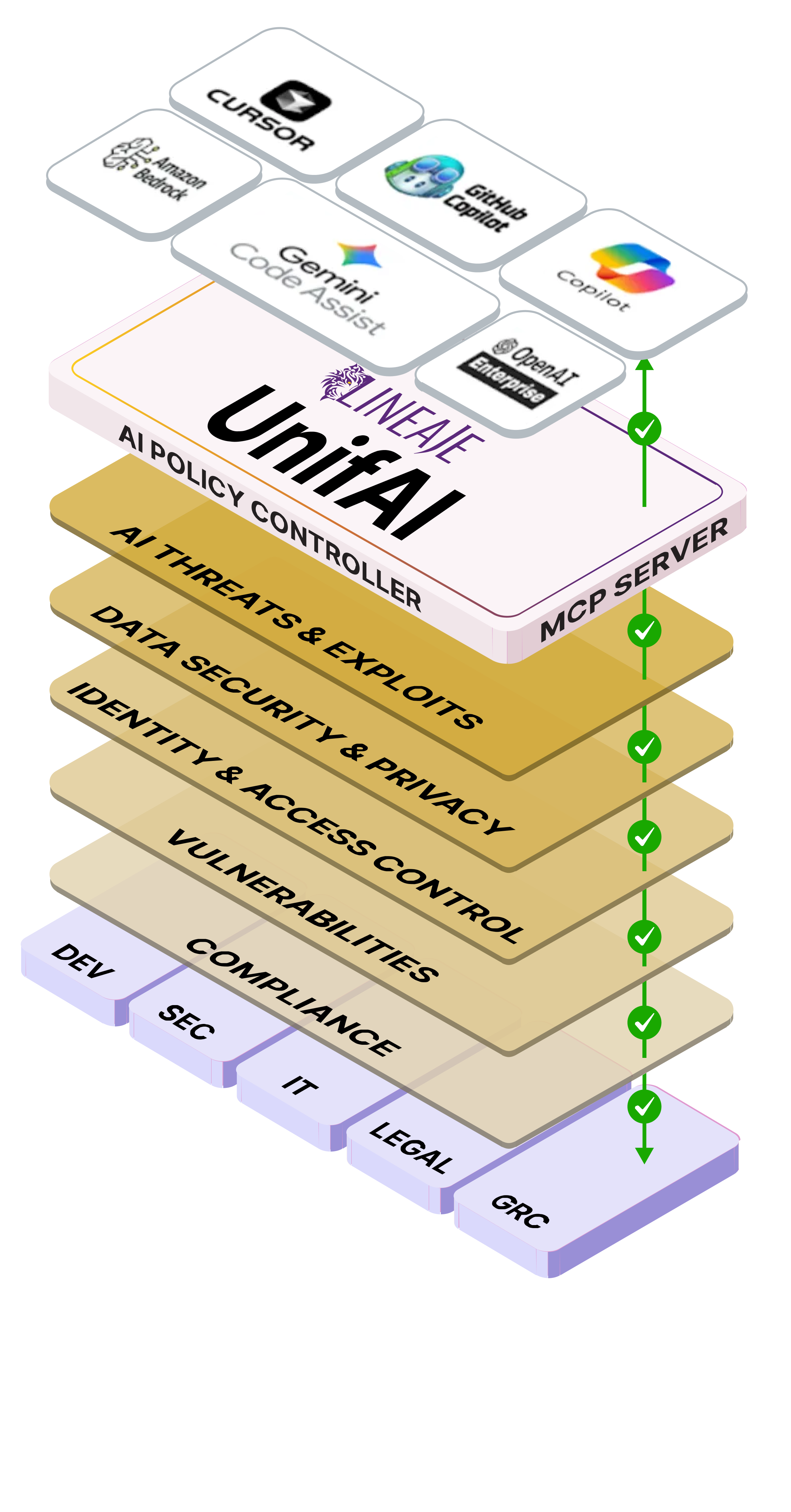

Identify all AI assets, and block malicious, hidden, or embedded active content or instructions.

Protect sensitive data and IP from exposure, and stop PII leakage to avoid compliance violations.

Define which users, services, and agents may access specific LLMs, agents, and MCP servers (A2A, A2MCP, A2LLM, and A2H).

Remove vulnerabilities before code is committed, and fix them autonomously without breaking applications.

Align with critical frameworks, regulations, and governance requirements out of the box (EU AI Act, OWASP AI Top Ten for AI & LLMs).
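As a rough illustration of the access-control point above (which users, services, and agents may reach which LLMs, agents, and MCP servers), the following is a minimal sketch of a default-deny allow list. It is not the product's implementation: the `Rule` and `AccessPolicy` names and every identifier such as `agent:billing-bot` or `mcp:crm-server` are hypothetical and chosen only for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """One allowed (principal, resource) pair. All identifiers are hypothetical."""
    principal: str  # e.g. "user:alice", "agent:billing-bot"
    resource: str   # e.g. "llm:general-chat", "mcp:crm-server"


class AccessPolicy:
    """Default-deny policy: a request passes only if an explicit rule exists."""

    def __init__(self, rules):
        self._allowed = frozenset(rules)

    def is_allowed(self, principal: str, resource: str) -> bool:
        return Rule(principal, resource) in self._allowed


# Assumed example rules covering user-to-LLM, agent-to-MCP, and agent-to-agent access.
policy = AccessPolicy([
    Rule("user:alice", "llm:general-chat"),
    Rule("agent:billing-bot", "mcp:crm-server"),
    Rule("agent:billing-bot", "agent:invoice-bot"),
])

print(policy.is_allowed("agent:billing-bot", "mcp:crm-server"))
print(policy.is_allowed("user:alice", "mcp:crm-server"))
```

The sketch denies anything not listed, so a newly added agent or MCP server has no access until a rule names it explicitly.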

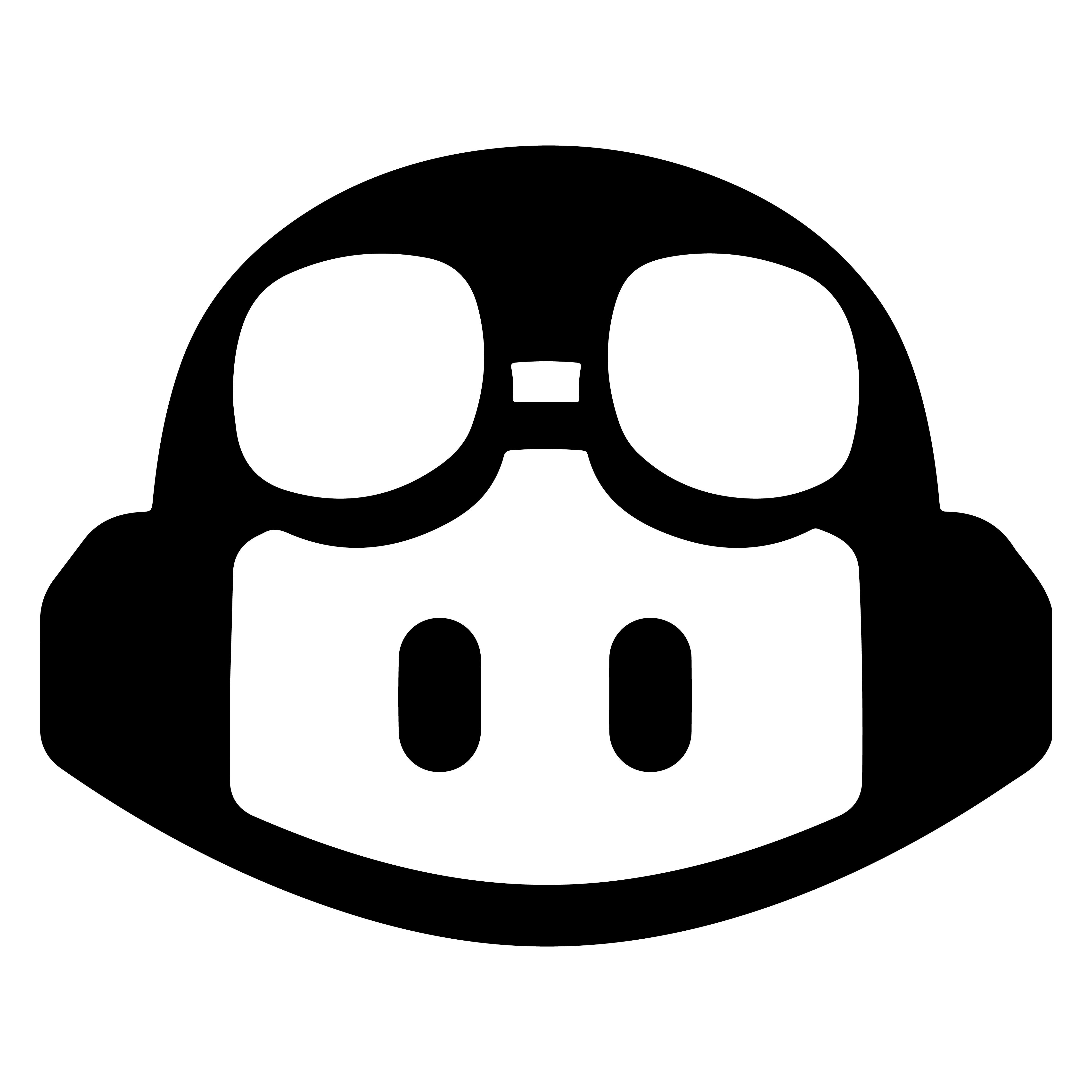

| Stage | Name |
|---|---|
| 10 | Actions on Objectives |
| 9 | AI C&C |
| 8 | Persistence |
| 7 | Lateral Movement |
| 6 | Privilege Escalation |
| 5 | Tool & Environment Interaction |
| 4 | Reasoning & Time Execution |
| 3 | Instruction & Weaponization |
| 2 | Trust & Manipulation |
| 1 | AI Recon |